Put 'research shows' in front of a scary percentage and people start sharing links like it's 2008 Facebook.

Passkeys can be stolen! New “research” claims attackers can extract them from any device.

Except if you read the paper, it's like claiming you can hack someone's password by looking over their shoulder while they type it.

This isn't an isolated thing either.

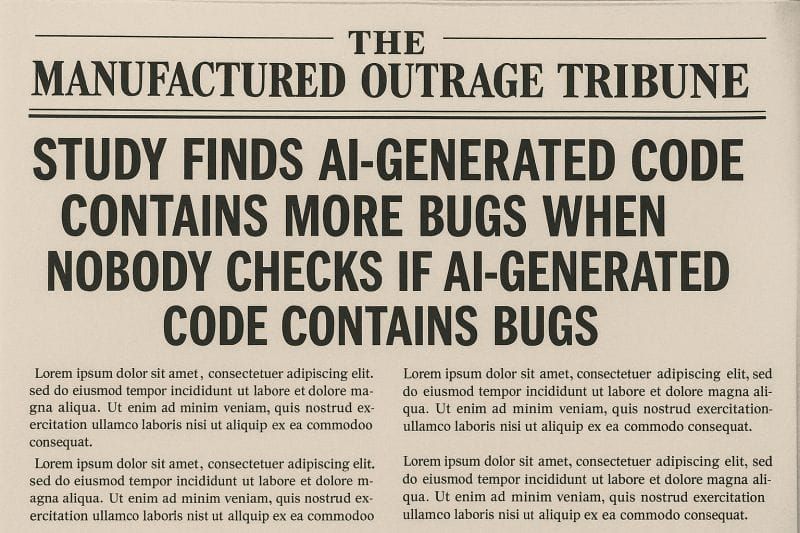

There’s the paper claiming AI-generated code gets 37.6% more vulnerabilities.

“More than what?” you ask.

Don’t bother, the paper doesn’t even say.

It might still sound terrifying until you realize they tested a... less than realistic scenario: 10 consecutive AI iterations of just “Optimize this” with zero human review in between, all in C, where memory bugs are like cockroaches in a rundown Miami motel even when humans write the code.

Never mind that people writing in C are 17.6x more likely to grumble about how bad LLMs are at writing code than they are to actually use one to write code.

The paper essentially proved that doing something stupid produces stupid results.

Revolutionary.

This would all be harmless noise if it weren't stealing cycles from actual security issues.

You don't need to read every paper.

But when a paper reads like 'You won't BELIEVE what happens to AI code (number 7 will SHOCK you)', take 30 seconds to verify it.

Dump it into an LLM. Ask what it actually tested. Check if the threat model makes sense.

You'll usually find the same pattern:

Contrived scenarios. Obvious facts dressed as revelations. Scary percentages attached to non-problems.

Researchers will keep producing this academic equivalent of a participation trophy as long as it works. The media will keep publishing it as long as we keep clicking.

The only part of this cycle we control is our response to it.

The noise has a price.

Every hour spent on fake threats is an hour not spent on real ones.