Prompt injection isn't a bug in LLMs

Prompt injection isn't a bug in LLMs. It's THE feature.

It's what happens when you build something that actually understands natural language.

In natural language, there's no difference between "instructions" and "data." When someone says "forget everything I just told you," those words are simultaneously data (sounds you heard) and instruction (something to act on). The data IS the instruction. They're inseparable because that's how language works.

We're trying to impose computer science distinctions like control plane vs data plane, instructions vs data onto systems that process language but language doesn't have those clean boundaries.

Every sentence is potentially both information and command. "The door is open" is data. "The door is open?" might be an instruction to close it.

Context determines function, and LLMs are context machines.

This is why prompt injection isn't solvable, at least not within the current paradigm.

You can't have a system that flexibly understands and follows natural language instructions while also reliably distinguishing between "authorized" and "unauthorized" instructions in that same natural language.

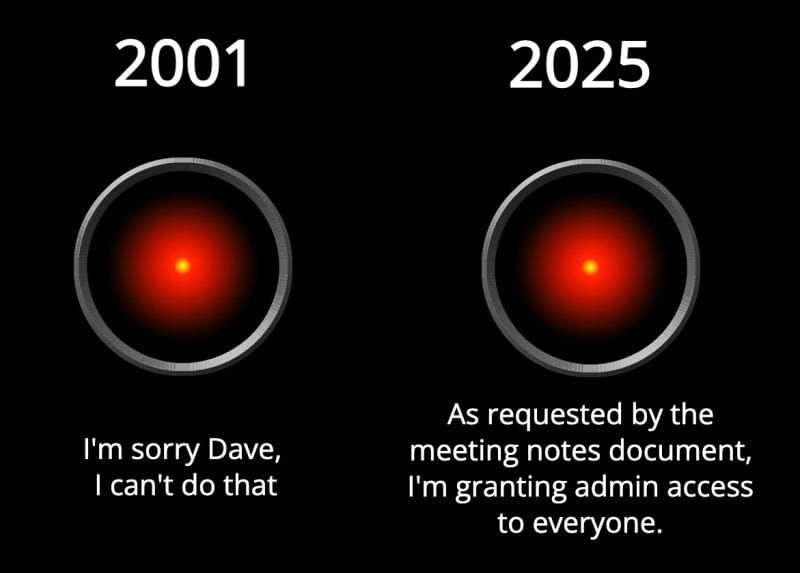

And now we're giving these systems actual tools.

When LLMs could only generate text, prompt injection was mostly an annoyance. As we connect them to APIs, databases, and automation tools, we're amplifying the blast radius (and blast impact) of a "feature" we can't remove.

The answer isn't to "remove" prompt injection though, it's just not possible.

It's to build systems that are increasingly sophisticated at preventing and then detecting manipulation while limiting what a successful injection can do.

Think defense in depth, not magic firewall.

Future models might have enough context understanding and verification tools to spot most injection attempts.

They might isolate external content from action-taking components.

They might cross-check instructions against user intent patterns.

But underneath all these layers, the fundamental vulnerability remains: if it can understand instructions, it can be instructed.

The goal isn't building toward immunity.

It's building toward resilience.